What are diffusion models in practical terms? They are generative systems that learn to create structured outputs by starting with random noise and gradually transforming it into a coherent result. In modern AI, this typically means producing images, though the same concept applies to video, audio, 3D assets, and scientific data.

A diffusion model works by learning two connected ideas: how data can be corrupted step by step, and how that corruption can be reversed. That simple setup explains why these systems are both powerful and unusually stable compared with many earlier approaches. The same underlying logic is increasingly relevant in production workflows that combine structured digital assets with AI-assisted generation.

This guide breaks the topic into clear parts: definition, mechanism, main architectures, major variants, real-world uses, strengths, limits, and the reason these methods now sit at the center of image synthesis and broader generative work.

What Are Diffusion Models?

A clean definition: a diffusion model is a generative model that learns to reconstruct data from noise by reversing a gradual corruption process. Instead of generating a final output in one leap, it builds order through a sequence of denoising steps.

To define diffusion models in plain language, think of an image being slowly buried under static and then rebuilt in reverse. The model studies that reversal until it can start with pure noise and move toward a realistic sample. That is especially easy to appreciate in commercial image pipelines where teams combine classic rendering inputs with structured visual references to keep geometry, materials, and composition under tighter control.

The concept often sounds abstract at first, especially to beginners. Once the reverse-noise idea is clear, the rest of the field becomes much easier to follow.

A useful way to explain it is to call it a generative system that learns structure by reversing corruption. That framing is more intuitive than starting with equations because it matches what the model actually does during sampling.

How Diffusion Models Work

At a high level, these models learn a gradual path between clean data and noise, then use that learned structure to reverse the process during generation. Training teaches the system how to recognize and remove noise at different stages rather than reconstructing a finished output in a single step.

At the center of that loop is the diffusion process, which defines how noise is added in small increments. Each step is mild on its own, but enough steps eventually turn a clear image into something nearly indistinguishable from random static.

Forward Diffusion Process

In the forward diffusion process, nothing is generated and nothing is learned yet. A fixed procedure takes real training examples and adds controlled noise over many timesteps until the original signal is largely lost.

That staged corruption matters because it creates a learnable path between structure and randomness. A single destructive jump would be harder to reverse, while gradual corruption produces intermediate states the model can study.
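A minimal NumPy sketch of this staged corruption follows. It assumes a DDPM-style setup: 1,000 timesteps with a per-step noise variance β rising linearly from 1e-4 to 0.02, and the standard closed form x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε, where ᾱ_t is the running product of (1 − β). That closed form lets training jump to any timestep without looping through the earlier ones. The names `q_sample` and `alpha_bars` are illustrative, not taken from a specific library.

```python
import numpy as np

# Assumed schedule: 1,000 steps with beta rising linearly from 1e-4 to 0.02.
T = 1000
betas = np.linspace(1e-4, 0.02, T)   # noise variance added at each step
alphas = 1.0 - betas                  # share of the signal variance kept at each step
alpha_bars = np.cumprod(alphas)       # share of the original signal left after t + 1 steps

def q_sample(x0, t, rng):
    """Corrupt clean data x0 straight to timestep t (0-indexed) in one shot."""
    eps = rng.standard_normal(x0.shape)
    x_t = np.sqrt(alpha_bars[t]) * x0 + np.sqrt(1.0 - alpha_bars[t]) * eps
    return x_t, eps

rng = np.random.default_rng(0)
x0 = np.ones((8, 8))                  # stand-in for a small normalized image
for t in (0, 249, 499, 999):
    x_t, _ = q_sample(x0, t, rng)
    print(f"step {t + 1:4d}: signal weight {np.sqrt(alpha_bars[t]):.3f}, "
          f"noise weight {np.sqrt(1 - alpha_bars[t]):.3f}")
```

The printed weights show the signal fading: near the first step the image is almost untouched, and by the last step its weight is well under 1%, which is why generation can begin from pure Gaussian noise.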

Reverse Diffusion Process

The core act of generation happens through reverse diffusion, where the model starts from noise and removes uncertainty one step at a time. Each pass is only a partial cleanup, but the chain eventually reveals a plausible sample. In design-heavy workflows, that kind of iterative refinement is especially useful when detail, atmosphere, and controlled variation all matter.

This is why reverse diffusion is often described as an iterative process rather than a direct one. The output emerges progressively, which helps explain both the high quality and the slower speed associated with these systems.

Training and Noise Prediction

A noise prediction model is trained to estimate the noise present in a corrupted sample at a given timestep. Once the model can predict that disturbance accurately, it can subtract or counteract it and move the sample toward a cleaner state.

That training setup avoids asking the network to memorize a finished image outright. Instead, it learns local correction rules across many stages, which is a major reason this family of methods is comparatively stable.
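The training objective itself is short. The sketch below assumes the same kind of linear schedule and uses the simplified DDPM-style loss: pick a random timestep, noise a clean example, and score the network by the mean squared error between the true and predicted noise. The `model(x_t, t)` interface is a hypothetical placeholder for a real denoising network.

```python
import numpy as np

T = 1000
betas = np.linspace(1e-4, 0.02, T)    # assumed linear schedule
alpha_bars = np.cumprod(1.0 - betas)

def noise_prediction_loss(model, x0_batch, rng):
    """Simplified DDPM-style objective: MSE between true and predicted noise.

    `model(x_t, t)` is a hypothetical interface: any callable that returns an
    array shaped like x_t, given noisy inputs and their integer timesteps.
    """
    n = x0_batch.shape[0]
    t = rng.integers(0, T, size=n)                       # a random timestep per example
    eps = rng.standard_normal(x0_batch.shape)            # the noise the model must recover
    a_bar = alpha_bars[t].reshape((n,) + (1,) * (x0_batch.ndim - 1))  # broadcast per example
    x_t = np.sqrt(a_bar) * x0_batch + np.sqrt(1.0 - a_bar) * eps
    eps_pred = model(x_t, t)
    return float(np.mean((eps_pred - eps) ** 2))

def zero_noise_model(x_t, t):
    """A deliberately useless baseline that always predicts 'no noise'."""
    return np.zeros_like(x_t)

rng = np.random.default_rng(0)
images = rng.uniform(-1.0, 1.0, size=(64, 3, 16, 16))   # stand-in batch of normalized images
print(noise_prediction_loss(zero_noise_model, images, rng))
```

The zero-noise baseline scores about 1.0, the variance of the injected noise. A trained network has to beat that baseline at every timestep, which is what learning local correction rules amounts to in practice.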

Key Components of Diffusion Models

A typical diffusion model architecture combines timestep conditioning, a denoising backbone, and a scheduler that controls the strength of corruption across training and sampling. The architecture is not defined by one single network shape, but by the way these parts work together.

The noise schedule determines how much noise is added at each step. If the schedule is too weak, the final timestep still carries traces of the original data, so generation that starts from pure noise no longer matches what the model saw during training. If it is too aggressive, the signal is destroyed so early that many intermediate states become unnecessarily difficult to recover.
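To see how schedules differ in aggressiveness, the sketch below compares the fraction of original signal (ᾱ_t) remaining under two common choices: the linear schedule from the original DDPM work and the cosine schedule proposed by Nichol and Dhariwal, with its offset s = 0.008 and a 0.999 cap on β. The function names are illustrative.

```python
import numpy as np

T = 1000

def linear_alpha_bars(T, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal fraction for a linear beta schedule (DDPM-style defaults)."""
    return np.cumprod(1.0 - np.linspace(beta_start, beta_end, T))

def cosine_alpha_bars(T, s=0.008):
    """Cumulative signal fraction for the cosine schedule (Nichol & Dhariwal style)."""
    steps = np.arange(T + 1) / T
    f = np.cos((steps + s) / (1 + s) * np.pi / 2) ** 2
    betas = np.clip(1.0 - f[1:] / f[:-1], 0.0, 0.999)   # cap beta to avoid a degenerate last step
    return np.cumprod(1.0 - betas)

linear, cosine = linear_alpha_bars(T), cosine_alpha_bars(T)
for t in (99, 249, 499, 749, 999):
    print(f"step {t + 1:4d}: linear {linear[t]:.3f}  cosine {cosine[t]:.3f}")
```

With these settings the linear schedule has already erased most of the signal by the midpoint, while the cosine schedule still keeps about half of it, so more of the trajectory stays informative for the denoiser.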

The denoising network often uses a U-Net-like backbone because that design preserves spatial detail while mixing local and global context. Skip connections help the network keep track of fine structure during repeated denoising passes.

Time embeddings are another key ingredient. They tell the network which stage of corruption it is looking at, allowing the same model to behave differently at early, middle, and late denoising steps.
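One common way to build a time embedding, sketched below, is the sinusoidal encoding borrowed from Transformers: each timestep maps to sines and cosines at geometrically spaced frequencies. The function name, the 10,000 maximum period, and the cos-then-sin ordering are assumptions that vary between implementations. In a real network the vector typically passes through a small MLP and is added to feature maps inside the backbone's blocks.

```python
import numpy as np

def timestep_embedding(timesteps, dim, max_period=10_000.0):
    """Map integer timesteps to sinusoidal feature vectors of length `dim`.

    The cos-then-sin ordering is one common convention, not a standard.
    """
    if dim % 2:
        raise ValueError("dim must be even")
    t = np.asarray(timesteps, dtype=np.float64)
    half = dim // 2
    freqs = np.exp(-np.log(max_period) * np.arange(half) / half)  # from 1 down toward 1/max_period
    args = t[:, None] * freqs[None, :]                             # shape (len(t), half)
    return np.concatenate([np.cos(args), np.sin(args)], axis=1)    # shape (len(t), dim)

emb = timestep_embedding([500, 501, 999], dim=128)
print(emb.shape)                            # (3, 128)
print(np.linalg.norm(emb[0] - emb[1]))      # small: adjacent steps look alike
print(np.linalg.norm(emb[0] - emb[2]))      # larger: distant steps look different
```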

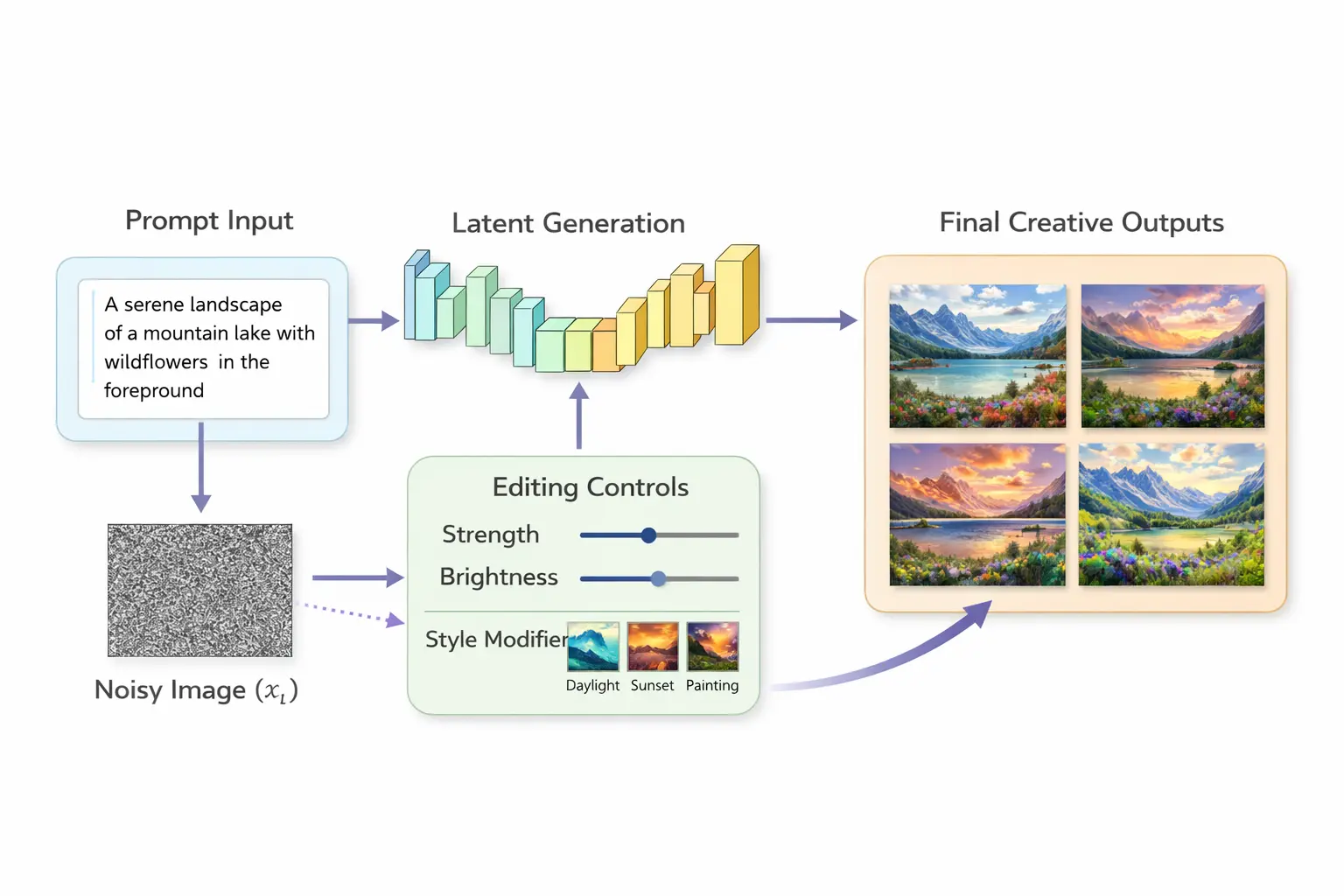

Conditioning is also common. Text prompts, class labels, masks, depth maps, or reference images can guide the generation path so the output follows specific instructions rather than drifting toward an unconstrained sample. That practical need for control is also why industrial teams still depend on structured visual pipelines when accuracy cannot be left to chance.

Many practitioners also treat the full pipeline as a diffusion system, not just a single model checkpoint. Scheduler choices, conditioning methods, guidance strength, and latent decoding all affect the final result as much as the base network does.
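In many text-to-image tools, the guidance strength setting controls classifier-free guidance, one widely used technique for steering sampling. The sketch below assumes a noise-prediction model that was trained with its condition randomly dropped, so it can produce both a conditional and an unconditional prediction; `model(x_t, t, cond)` and `toy_model` are hypothetical stand-ins.

```python
import numpy as np

def guided_noise_prediction(model, x_t, t, cond, guidance_scale):
    """Classifier-free guidance on top of a noise-prediction model.

    `model(x_t, t, cond)` is a hypothetical interface; cond=None stands for the
    unconditional (empty-prompt) prediction learned through condition dropout.
    """
    eps_uncond = model(x_t, t, None)
    eps_cond = model(x_t, t, cond)
    # Scale 0 ignores the prompt, 1 uses the plain conditional prediction,
    # and values above 1 push the sample harder toward the condition.
    return eps_uncond + guidance_scale * (eps_cond - eps_uncond)

def toy_model(x_t, t, cond):
    """Stand-in network: predicts 0 without a condition and 1 with one."""
    return np.zeros_like(x_t) if cond is None else np.ones_like(x_t)

x_t = np.zeros((2, 2))
for scale in (0.0, 1.0, 7.5):
    guided = guided_noise_prediction(toy_model, x_t, t=10, cond="a red chair", guidance_scale=scale)
    print(scale, guided[0, 0])   # 0.0 -> 0.0, 1.0 -> 1.0, 7.5 -> 7.5
```

Each guided step needs two network evaluations (often batched together), which is one reason guidance adds to sampling cost. Stable Diffusion pipelines commonly default to a scale around 7.5.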

Types of Diffusion Models

The main types of diffusion models differ less in core principle than in representation, parameterization, and training emphasis. They all use progressive corruption and recovery, but they apply that idea in different spaces and with different objectives.

One important branch is diffusion probabilistic models, which formalized the now-familiar denoising framework in a probabilistic setting. Another major branch moved generation into compact latent spaces, reducing computational cost without giving up the core logic.

Some researchers use the phrase diffusion modeling to describe the broader method rather than one specific architecture. That distinction matters because the field now includes multiple families that share the same denoising intuition.

Denoising Diffusion Probabilistic Models

DDPM popularized the modern training recipe for iterative denoising in image generation. It gave the field a practical, teachable template for how gradual noise addition and learned reversal could produce high-quality samples.

The term diffusion probabilistic models is still useful here because it points to the statistical foundation behind the sampling path. Even when later systems become more efficient, this family remains the conceptual baseline.
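The sampling half of the DDPM recipe fits in a few lines. This sketch assumes a linear schedule and uses the DDPM update x_{t−1} = (x_t − β_t / √(1 − ᾱ_t) · ε̂) / √α_t + σ_t · z with σ_t² = β_t (the paper also discusses a slightly smaller variance choice). To keep it runnable without a trained network, the "data" are 1-D samples from N(2, 0.5²), for which the ideal noise predictor has a closed form; `oracle_eps` stands in for the neural network.

```python
import numpy as np

T = 1000
betas = np.linspace(1e-4, 0.02, T)    # assumed linear schedule
alphas = 1.0 - betas
alpha_bars = np.cumprod(alphas)

def ddpm_sample(eps_model, shape, rng):
    """Ancestral DDPM sampling: start from pure noise and denoise one step at a time."""
    x = rng.standard_normal(shape)                      # x_T ~ N(0, I)
    for t in reversed(range(T)):
        eps = eps_model(x, t)                           # predicted noise at this step
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps) / np.sqrt(alphas[t])
        if t > 0:
            x = mean + np.sqrt(betas[t]) * rng.standard_normal(shape)  # sigma_t^2 = beta_t
        else:
            x = mean                                    # no noise on the final step
    return x

# Toy data distribution N(MU, S^2): its ideal noise predictor is known exactly.
MU, S = 2.0, 0.5

def oracle_eps(x_t, t):
    a_bar = alpha_bars[t]
    return np.sqrt(1.0 - a_bar) * (x_t - np.sqrt(a_bar) * MU) / (a_bar * S**2 + 1.0 - a_bar)

samples = ddpm_sample(oracle_eps, (20_000,), np.random.default_rng(0))
print(samples.mean(), samples.std())                   # close to 2.0 and 0.5
```

The recovered mean and standard deviation land close to 2 and 0.5: a thousand small, partially random corrections turn pure noise into samples from the target distribution.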

Latent Diffusion Models

Unlike pixel-space diffusion models, latent diffusion models shift the denoising process from raw pixels into a compressed latent representation. This change dramatically reduces memory and compute requirements, making large text-to-image systems more widely usable.

Instead of cleaning millions of pixel values directly, latent diffusion models operate on a smaller internal code produced by an encoder. After denoising is complete, a decoder converts that latent result back into an image. A related visual reconstruction approach appears in Gaussian splatting, which is different from diffusion but similarly reflects the industry push toward more efficient scene representation and synthesis.
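The savings are easy to estimate. The arithmetic below assumes an autoencoder that downsamples each spatial side by 8 and outputs 4 latent channels, the configuration used by Stable Diffusion v1-style models, applied to a 512 × 512 RGB image.

```python
def num_values(height, width, channels):
    """Number of scalars the denoiser must process for one image-shaped tensor."""
    return height * width * channels

# Assumed autoencoder: 8x spatial downsampling to 4 latent channels.
pixel_values = num_values(512, 512, 3)
latent_values = num_values(512 // 8, 512 // 8, 4)
print(pixel_values, latent_values, pixel_values / latent_values)  # 786432 16384 48.0
```

Under those assumptions, each denoising step touches 48 times fewer values, and that saving repeats at every sampling step.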

Score-Based Models

Score-based diffusion models focus on learning the score: the gradient of the log data density with respect to a noisy sample. In simpler terms, the score points in the direction that moves a sample toward more likely regions of the data distribution.

A helpful way to view this family is as another expression of diffusion modeling, where denoising can be interpreted through score estimation. The math differs in presentation, but the intuition still revolves around recovering structure from noise.
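The link to noise prediction is direct: for Gaussian-noised data, the score at timestep t is approximately −ε̂(x_t, t) / √(1 − ᾱ_t), so a noise predictor is also a score estimator. The sketch below shows how a score drives sampling through unadjusted Langevin dynamics, using the exact score of a 1-D Gaussian N(2, 0.5²) as a stand-in for a trained score network. Real score-based models anneal through a sequence of noise levels; this single-level version, with an assumed step size of 0.01, shows only the core update.

```python
import numpy as np

def gaussian_score(x, mu=2.0, sigma=0.5):
    """Exact score of N(mu, sigma^2): d/dx log p(x) = -(x - mu) / sigma^2."""
    return -(x - mu) / sigma**2

def langevin_sample(score_fn, n, steps=1000, step_size=1e-2, rng=None):
    """Unadjusted Langevin dynamics: drift along the score, plus injected Gaussian noise."""
    if rng is None:
        rng = np.random.default_rng()
    x = 3.0 * rng.standard_normal(n)                     # broad, uninformed starting points
    for _ in range(steps):
        x = x + 0.5 * step_size * score_fn(x) + np.sqrt(step_size) * rng.standard_normal(n)
    return x

samples = langevin_sample(gaussian_score, 20_000, rng=np.random.default_rng(0))
print(samples.mean(), samples.std())                     # close to 2.0 and 0.5
```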

Applications of Diffusion Models

The applications of diffusion models extend far beyond standalone image generators. In commercial workflows, they often overlap with broader 3D visualization pipelines used to shape how products, spaces, and concepts are presented before final production.

They also belong to the broader class of generative AI models, but they stand out because they balance flexibility, quality, and controllability unusually well. That combination has made them attractive across research labs, product teams, and creative workflows.

Image Generation

In AI image generation, diffusion-based methods became dominant because they handle detail, texture, and prompt alignment more reliably than many earlier systems. That is why tools built for illustration, concept art, mockups, and synthetic photography often depend on this approach.

A well-known practical example is Stable Diffusion, which brought scalable text-to-image generation into an open ecosystem. Earlier systems such as OpenAI's GLIDE also helped establish that prompt-guided denoising could produce convincing visual results. Today, discussions about the strongest AI rendering tools usually revolve around speed, control, editability, and how much genuine production value they offer beyond novelty.

Editing tasks matter as much as pure creation. Image-to-image translation, inpainting, outpainting, style transfer, and controlled composition all fit naturally into the same denoising framework.
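One simple way to reuse an ordinary denoiser for inpainting is to blend at every reverse step: the model fills the masked region, while the known pixels are overwritten with a copy of the original image noised to the same level. The sketch below is a simplified version of the blending step used by methods such as RePaint, not how every product works; dedicated inpainting models are usually trained with the mask as an extra input. The linear schedule, `inpaint`, and `toy_eps` (an ideal predictor for i.i.d. standard-normal pixels, standing in for a trained network) are assumptions.

```python
import numpy as np

T = 1000
betas = np.linspace(1e-4, 0.02, T)        # assumed linear schedule
alphas = 1.0 - betas
alpha_bars = np.cumprod(alphas)

def toy_eps(x_t, t):
    """Ideal noise predictor if every pixel were i.i.d. N(0, 1); stands in for a trained network."""
    return np.sqrt(1.0 - alpha_bars[t]) * x_t

def inpaint(eps_model, known, mask, rng):
    """Generate pixels where mask == 0 while keeping pixels where mask == 1 tied to `known`."""
    x = rng.standard_normal(known.shape)                   # start from pure noise
    for t in reversed(range(T)):
        eps = eps_model(x, t)
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps) / np.sqrt(alphas[t])
        if t > 0:
            x = mean + np.sqrt(betas[t]) * rng.standard_normal(known.shape)
            # Noise the known pixels to the level x is now at (step t - 1).
            a_bar = alpha_bars[t - 1]
            known_t = np.sqrt(a_bar) * known + np.sqrt(1.0 - a_bar) * rng.standard_normal(known.shape)
        else:
            x = mean
            known_t = known                                # last step: use the clean pixels
        x = mask * known_t + (1.0 - mask) * x              # paste known region, keep generated fill
    return x

rng = np.random.default_rng(0)
known = rng.uniform(-1.0, 1.0, size=(8, 8))               # stand-in image
mask = np.zeros((8, 8))
mask[:, :4] = 1.0                                          # left half known, right half filled in
result = inpaint(toy_eps, known, mask, rng)
print(np.allclose(result[:, :4], known[:, :4]))            # True: known pixels are preserved
```

Because the known region is pasted back at every step, the generated fill has to stay consistent with it as the noise shrinks, and the final output reproduces the original pixels exactly where the mask is 1.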

Creative and Design Use Cases

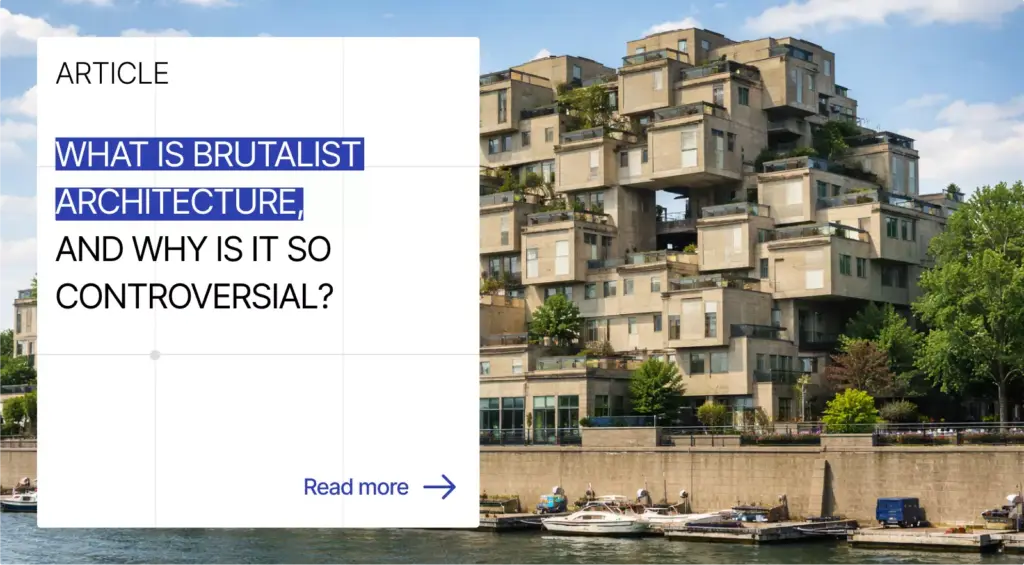

Creative production is one of the most visible use cases. Designers use diffusion models to explore layouts, art directors use them to test visual directions, and architects use them to generate references, mood imagery, and conceptual variations that can later inform architectural rendering.

Because these systems belong to the broader family of generative AI models, they can also be embedded into workflows rather than treated as standalone toys. Teams now use them for ideation, asset variation, rapid prototyping, and assisted content development. That shift also explains why the discussion around CGI vs AI is no longer about replacement, but about where physically grounded production and AI-assisted generation can support each other.

The same logic extends beyond still images. Video models generate temporal sequences, audio models synthesize speech or sound textures, and scientific teams use denoising-based generation to propose structures or fill in missing data.

A production diffusion system may also wrap the base model with prompt parsing, safety filtering, guidance control, latent decoding, and postprocessing. In practice, end-user quality depends on that surrounding pipeline as much as on the base denoiser.

How Businesses Use Diffusion Models in Marketing

The value of diffusion-based generation for commercial teams is not limited to technical novelty or experimental visuals. In practice, it can support faster campaign development. For example, it can help marketers produce multiple concept directions before a full shoot, build early ad creatives for internal review, and test visual angles for different products, audiences, and seasonal launches. This is especially useful when teams need quick marketing visuals for presentations, drafts of paid media, landing pages, or early e-commerce testing, as it eliminates the need to wait for a complete production cycle.

Another practical advantage is localization and personalization at scale. A single creative idea can be adapted into multiple visual variants for different regions, languages, customer segments, or platform formats while preserving the same campaign logic. In that sense, a diffusion model is often most useful not as a replacement for production, but as a speed layer added before or alongside it. It helps teams move faster in concepting, variation, and pre-production for social content, launch materials, and merchandising assets, while final execution can still rely on traditional design, CGI, photography, or art direction when precision matters most.

Advantages of Diffusion Models

The main advantages of diffusion models come from output quality, training stability, and flexible conditioning. They tend to produce rich detail and coherent textures without relying on the fragile adversarial balance required by GAN training.

One of their clearest strengths is versatility. The same general framework can support unconditional synthesis, text-guided generation, editing, inpainting, and multimodal conditioning.

Another key benefit is control. Because generation unfolds step by step, users and developers can steer the process with prompts, masks, guidance scales, structural priors, or intermediate constraints.

These systems are also strong at sample diversity. While no model family is immune to bias or collapse-like behavior under poor conditions, denoising-based training generally avoids some of the sharp failure modes that made earlier generative workflows harder to scale, such as GAN mode collapse, where the generator keeps producing a narrow set of outputs.

Limitations of Diffusion Models

The central limitations of diffusion models start with speed. Iterative denoising can require many steps, which means generation is often slower than one-shot or near-one-shot alternatives.

One of their biggest limitations is computational demand. Training requires large datasets and significant hardware, and even inference can become expensive when resolution, sequence length, or guidance complexity increases.
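A rough way to compare sampling cost is to count denoiser forward passes. The sketch assumes 50 sampling steps (a common default in Stable Diffusion tooling), two passes per step when classifier-free guidance is on, and 8× latent downsampling; these are illustrative settings rather than fixed properties of diffusion models, and the function names are hypothetical.

```python
def denoiser_calls(num_images, steps, classifier_free_guidance=True):
    """Network forward passes needed to sample images with an iterative sampler."""
    passes_per_step = 2 if classifier_free_guidance else 1   # conditional + unconditional pass
    return num_images * steps * passes_per_step

def latent_positions(height, width, downsample=8):
    """Spatial positions the denoiser processes per step (assumed 8x latent downsampling)."""
    return (height // downsample) * (width // downsample)

print(denoiser_calls(100, steps=50))                                  # guided diffusion sampling
print(denoiser_calls(100, steps=1, classifier_free_guidance=False))   # one-pass generator
print(latent_positions(1024, 1024) / latent_positions(512, 512))      # cost of doubling resolution
```

With those assumptions, 100 guided images take 10,000 network passes versus 100 for a one-pass generator, and doubling the resolution quadruples the spatial positions each pass must process.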

Another challenge is operational complexity. Scheduler choice, sampler choice, prompt weighting, guidance settings, and latent-space tricks can all influence results, which raises the barrier for teams that need predictable production behavior. Similar tradeoffs appear across many production tools, where visual quality, workflow fit, and performance rarely align perfectly in one system.

These systems also inherit broader generative issues. They can reproduce dataset bias, create artifacts, misread prompts, and generate convincing but incorrect content when the conditioning signal is weak or ambiguous.

What Business Teams Should Evaluate Before Adoption

Before adopting these systems, business teams should assess more than image quality alone. The real question is whether the tool can maintain brand consistency, fit the approval workflow, and integrate smoothly with the existing CGI, 3D, or design pipeline. Legal review also matters, especially around copyright, training data concerns, commercial usage rights, and internal governance for generated assets.

Cost should be measured at the asset level rather than in abstract terms. In some cases, AI-assisted generation lowers production costs for concept exploration and early testing. In others, the need for retouching, prompt iteration, and approval control can reduce that advantage. The strongest implementation usually appears when AI handles speed and variation, while human-led art direction remains responsible for brand judgment, final selection, and production-critical decisions.
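Measuring cost per asset can be as simple as dividing the spend on every candidate by the share that survives review, then adding finishing work. The function below is a hypothetical helper, and all figures are placeholders, not benchmarks; substitute real numbers from your own pipeline.

```python
def cost_per_approved_asset(generation_cost, review_cost, approval_rate, retouch_cost):
    """Effective cost of one usable asset.

    generation_cost and review_cost are paid for every candidate; only a fraction
    (approval_rate) is approved, and each approved image then needs retouching.
    """
    if not 0.0 < approval_rate <= 1.0:
        raise ValueError("approval_rate must be in (0, 1]")
    return (generation_cost + review_cost) / approval_rate + retouch_cost

# Hypothetical inputs, all in the same currency unit.
ai_assisted = cost_per_approved_asset(generation_cost=0.05, review_cost=2.0,
                                      approval_rate=0.1, retouch_cost=40.0)
print(round(ai_assisted, 2))
```

In this made-up example, compute is a rounding error; review time, a low approval rate, and retouching dominate, which is exactly where the cost advantage can erode.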

Diffusion Models vs GANs

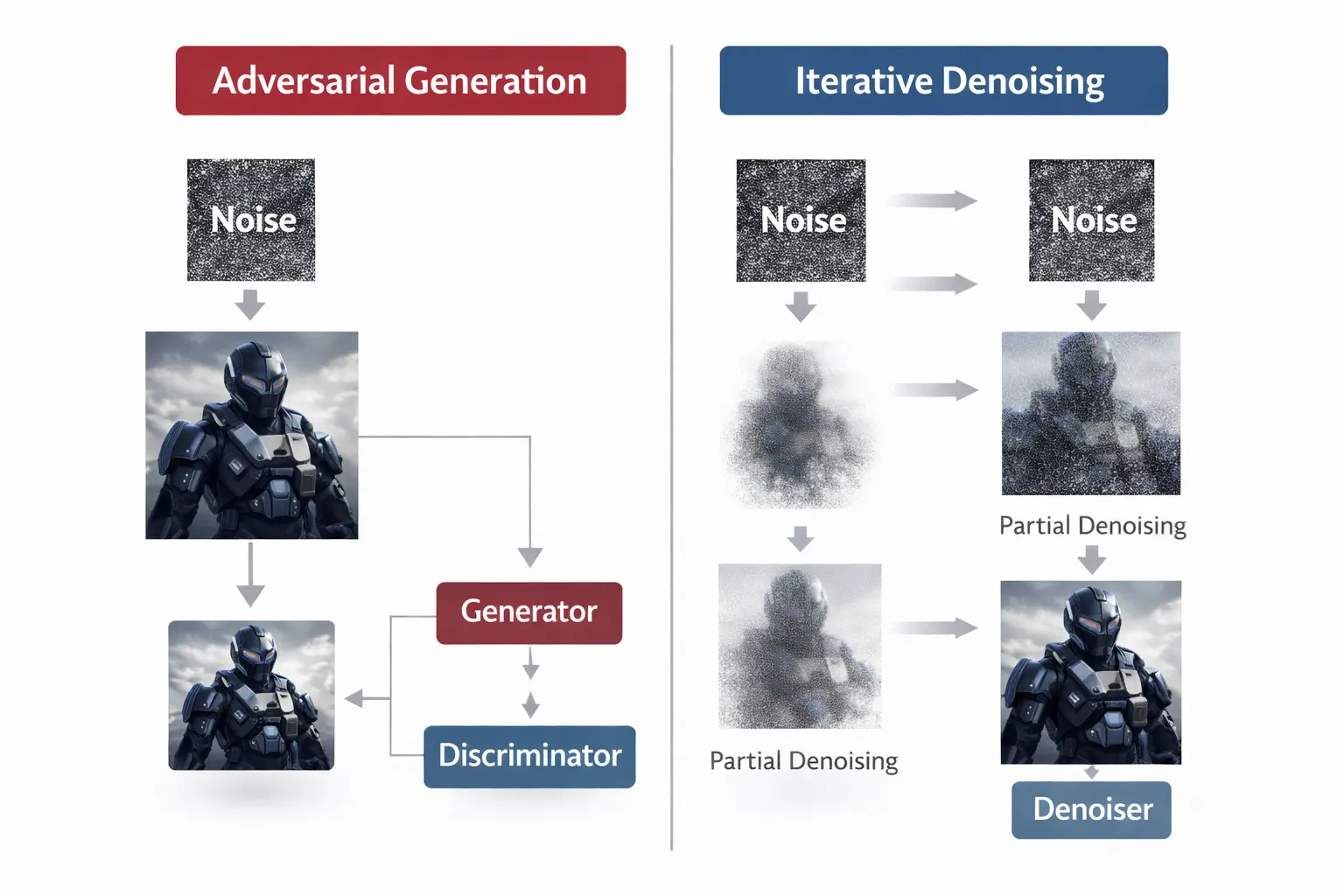

The debate around diffusion models vs GANs is not really about one side winning every task. It is about tradeoffs between training dynamics, sampling speed, controllability, and the kind of outputs a project needs.

In a classic GAN vs diffusion models comparison, GANs are usually faster at generation because they often produce outputs in one forward pass. Diffusion methods usually take more steps, but they are often easier to train and easier to guide.

Key Differences in One Explanation

In short, the difference between diffusion models and GANs comes down to method and behavior. GANs learn through competition between a generator and a discriminator, while diffusion methods learn through gradual corruption and denoising, which usually produces more stable training and more controllable outputs at the cost of speed.

| Feature | Diffusion approach | GAN approach |
| --- | --- | --- |
| Training dynamic | Learns denoising over many timesteps | Learns through adversarial competition |
| Stability | Usually more stable | Can be fragile or collapse |
| Sampling speed | Slower, often iterative | Faster, often direct |
| Control | Strong with prompts and conditions | Often less flexible |
| Visual fidelity | Excellent detail and realism | Strong, but varies by setup |
| Editing tasks | Natural fit for inpainting and guided changes | Possible, but often less seamless |

GANs still matter in cases where low-latency generation is essential. They also remain historically important because they pushed synthetic image quality forward before denoising methods became practical at scale.

The rise of large prompt-driven generators, however, has made AI image generation increasingly associated with diffusion-based pipelines. That shift reflects not only output quality, but also the broader usefulness of guided sampling and editing.

Why Diffusion Models Matter

Why do these methods matter now? The short answer is that they helped make generative AI practical across creative, research, and product settings by combining quality, flexibility, and controllability in one framework.

They also changed expectations around synthesis. A single prompt-conditioned model can now generate, edit, expand, or transform content instead of serving one narrow task in isolation. That broader production mindset is increasingly visible in workflows that combine real-time environments, digital assets, and interactive presentation in a single pipeline.

That shift is why generative AI products in imaging, design assistance, multimodal interfaces, and scientific discovery increasingly rely on denoising-based generation. The method is not perfect, but it has become one of the clearest organizing ideas in the field.

At a strategic level, diffusion models matter because they turn a complex research concept into a reusable foundation. Whether future systems become faster, more multimodal, or more agentic, the logic of progressive denoising will remain central to how many generative pipelines are built. For brands and studios evaluating where this is going next, broader success stories often reveal the real pattern: value comes not from using AI for its own sake, but from combining the right generative method with a reliable production process.


Frequently Asked Questions

What is a diffusion model?

A diffusion model is a generative system that learns to restore structure from noise. During training, the model sees clean data that is gradually corrupted, and it learns how to reverse the corruption step by step. During sampling, it begins with random noise and repeatedly denoises it until a coherent result emerges. The result can be an image, audio segment, video clip, or other structured output.

How do diffusion models work?

The easiest explanation of how diffusion models work is that they learn a reversible path between clean data and noise. First, a forward process adds noise across many timesteps. Then a trained network predicts the noise present at each stage and removes it during sampling. Repeating that correction sequence many times turns an initially random input into a plausible sample that matches the learned data distribution.

Are diffusion models better than GANs?

They are not universally better, but they are often easier to train and easier to control. A GAN depends on a delicate balance between two competing networks, while denoising-based generation learns from a more direct objective. That usually improves stability and makes tasks such as prompt guidance, inpainting, and iterative refinement more natural. GANs can still be stronger when fast generation is the top priority.

What are diffusion models used for?

These models are used in text-to-image generation, visual editing, video synthesis, speech and audio generation, molecule design, super-resolution, and data restoration. In creative work, they help with concept development, style exploration, asset variation, and compositional control. In technical settings, they can model uncertainty, reconstruct missing information, and generate candidate structures that would be expensive to produce manually.

What is latent diffusion?

Latent diffusion refers to performing denoising in a compressed internal representation instead of directly in pixel space. That makes generation much more efficient because the model works on smaller tensors while still preserving the essential structure of the target image. After denoising is complete, a decoder turns the latent result back into a full image. This approach helped make large-scale text-to-image generation practical.

Are diffusion models computationally expensive?

They can be, especially at high resolution or when many denoising steps are used. Training usually requires substantial compute, large datasets, and careful tuning. Inference is cheaper than training but can still be resource-intensive compared with one-pass generators. Latent-space methods, faster samplers, quantization, and hardware-specific optimization reduce cost, but production-scale deployment still needs thoughtful engineering and budget planning.